Ultimate Guide to Web Scraping: Tools & Python (4 Steps)

TL;DR

Screen scraping a web page involves extracting data either with point-and-click tools like the Web Scraper Chrome extension and ParseHub or by writing Python code with libraries such as Beautiful Soup and Scrapy. It's used for applications like price monitoring and lead generation, and it can be done manually or fully automated.

Choosing the right tool or library depends on your specific needs and technical skills.

Automate your data extraction tasks with Bardeen to streamline competitive analysis, market research, or data collection for machine learning projects.

Screen scraping, a technique for extracting the data a web page or application displays, is a practical way to automate data collection. In this step-by-step guide, we'll walk you through screen scraping a web page using Python and popular libraries like BeautifulSoup and Selenium. We'll cover the tools, setup, and practical examples you need to gather data efficiently for competitive analysis, price monitoring, and data aggregation, while addressing legal and ethical considerations.

Introduction to Screen Scraping

Screen scraping is a technique for extracting data displayed on a screen. It differs from web scraping, which focuses specifically on extracting data from websites: screen scraping captures visual data as plain text from many kinds of sources, including desktop applications, websites, and even legacy systems. Automating this collection makes it significantly faster and more efficient than gathering the information by hand.

The significance of screen scraping lies in its ability to facilitate data extraction and automation across a wide range of use cases, such as:

- Competitive analysis: Monitoring competitor prices, product offerings, and strategies

- Price monitoring: Tracking price fluctuations for products or services across multiple platforms

- Data aggregation: Collecting and consolidating data from various sources for analysis or reporting

By leveraging screen scraping, businesses can gain valuable insights, streamline processes, and make data-driven decisions more effectively. As we explore the tools, techniques, and practical examples in the following sections, you'll discover how to harness the power of screen scraping for your own data extraction and automation needs.

Tools and Technologies for Screen Scraping

When it comes to screen scraping, there are several popular tools and libraries available to streamline the process. Some of the most widely used options include:

- BeautifulSoup: A Python library that simplifies the parsing and extraction of data from HTML and XML documents.

- Selenium: A powerful tool for automating web browsers, allowing you to interact with web pages and extract data, even from dynamically loaded content.

- Scrapy: A comprehensive web scraping framework for Python that provides a complete set of tools for extracting data from websites.

Each tool has its own strengths and is suited for different types of web pages. BeautifulSoup excels at parsing and navigating HTML documents, making it ideal for scraping static web pages. Selenium, on the other hand, is particularly useful for scraping dynamic websites that heavily rely on JavaScript to load content. Scrapy offers a robust and scalable solution for large-scale web scraping projects.
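A rough way to apply the static-versus-dynamic distinction is to check whether the element you want already appears in the HTML the server sends back. The sketch below assumes you already have that raw HTML as a string (for example from requests.get(url).text); the helper name content_in_raw_html and the sample markup are hypothetical. Treat it as a heuristic, not a guarantee: a missing element usually means JavaScript renders it, which is where Selenium comes in.

```python
from bs4 import BeautifulSoup


def content_in_raw_html(html: str, css_selector: str) -> bool:
    """Return True if the selector matches something in the server-sent HTML.

    Hypothetical helper: if nothing matches, the data is probably injected
    by JavaScript, and a browser-automation tool such as Selenium may be needed.
    """
    soup = BeautifulSoup(html, "html.parser")
    return soup.select_one(css_selector) is not None


# Sample markup for illustration only
static_page = '<ul><li class="price">$19.99</li></ul>'
js_page = '<div id="app"></div><script src="bundle.js"></script>'

print(content_in_raw_html(static_page, "li.price"))  # True: BeautifulSoup is enough
print(content_in_raw_html(js_page, "li.price"))      # False: consider Selenium
```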

For users without a coding background, there are also non-programmatic tools available. Octoparse and ParseHub are examples of visual web scraping tools that allow you to extract data through a point-and-click interface, without the need for programming skills.

With Bardeen, you can automate screen scraping tasks quickly and save time. Check out our scraping integration for more details. No coding needed.

Setting Up Your Environment for Scraping

Before you start web scraping with Python, it's essential to set up your environment with the necessary tools and libraries. Here's a step-by-step guide:

- Install Python: Make sure you have Python installed on your system. You can download the latest version from the official Python website (https://www.python.org) and follow the installation instructions for your operating system.

- Set up a virtual environment (optional but recommended): Creating a virtual environment helps keep your project dependencies separate from your system-wide Python installation. You can create a virtual environment using the following command:

```shell
python -m venv myenv
```

Activate the virtual environment on macOS or Linux:

```shell
source myenv/bin/activate
```

On Windows, run myenv\Scripts\activate instead.

- Install required libraries: Install the necessary libraries for web scraping, such as requests and BeautifulSoup, using pip. Open your terminal or command prompt and run the following command:

```shell
pip install requests beautifulsoup4
```

- Familiarize yourself with browser developer tools: Most modern web browsers come with built-in developer tools that allow you to inspect the HTML structure of a web page. This is crucial for identifying the elements you want to scrape. In Chrome or Firefox, you can access the developer tools by right-clicking on a page and selecting "Inspect" or by pressing Ctrl+Shift+I (Windows) or Cmd+Option+I (Mac).
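Before writing any scraping code, a short check script can confirm that the setup steps above worked. This is a minimal sketch; check_environment is a hypothetical helper, and it checks import names rather than pip package names (the beautifulsoup4 package is imported as bs4).

```python
import importlib.util
import sys


def check_environment(packages=("requests", "bs4")):
    """Report which import names can be found by the current interpreter."""
    return {name: importlib.util.find_spec(name) is not None for name in packages}


if __name__ == "__main__":
    print("Python", sys.version.split()[0])
    # In a virtual environment, sys.prefix points at the venv, not the base install
    print("Running inside a virtual environment:", sys.prefix != sys.base_prefix)
    for name, found in check_environment().items():
        print(f"{name}: {'installed' if found else 'missing, install it with pip'}")
```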

With your Python environment set up and the necessary libraries installed, you're ready to start writing your web scraping code. Remember to refer to the documentation of the libraries you're using for more detailed information on their usage and features.

Extracting Data: Practical Examples

Now that you have your environment set up, let's dive into some practical examples of extracting data from web pages using Python and BeautifulSoup.

- Extracting prices from an e-commerce site:

- Identify the HTML elements that contain the price information using the browser's developer tools.

- Use BeautifulSoup to parse the HTML and locate the specific elements:

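```python
import requests
from bs4 import BeautifulSoup

url = "https://example.com/products"
response = requests.get(url, timeout=10)
response.raise_for_status()  # stop early on 4xx/5xx responses

soup = BeautifulSoup(response.content, "html.parser")

# Each price is assumed to sit in <span class="price">...</span>
prices = soup.find_all("span", class_="price")
for price in prices:
    print(price.get_text(strip=True))
```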

- Scraping event details from a website:

- Inspect the event elements to determine their structure and relevant attributes.

- Use BeautifulSoup to extract the desired information:

```python
# Reuses the soup object created in the previous example
events = soup.find_all("div", class_="event")
for event in events:
    title = event.find("h3").get_text(strip=True)
    date = event.find("p", class_="date").get_text(strip=True)
    location = event.find("span", class_="location").get_text(strip=True)
    print(f"Title: {title}\nDate: {date}\nLocation: {location}\n")
```

- Handling pagination:

- Check if the page has a "next" or "load more" button.

- If present, extract the URL or necessary parameters for the next page.

- Modify your code to iterate through the pages until no more pages are available:

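```python
import time
from urllib.parse import urljoin

import requests
from bs4 import BeautifulSoup

url = "https://example.com/products"
while url:
    response = requests.get(url, timeout=10)
    response.raise_for_status()
    soup = BeautifulSoup(response.content, "html.parser")

    # Extract data from the current page
    # ...

    # Follow the "next" link; urljoin resolves relative hrefs like "/products?page=2"
    next_page = soup.find("a", class_="next-page", href=True)
    url = urljoin(url, next_page["href"]) if next_page else None
    time.sleep(1)  # pause between requests to avoid overloading the server
```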

- Dealing with dynamically loaded content (using Selenium):

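- Install Selenium (pip install selenium). Selenium 4.6 and later include Selenium Manager, which downloads a matching driver for your browser automatically; with older versions, install the appropriate web driver yourself.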

- Use Selenium to load the page and wait for the dynamic content to appear:

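```python
from bs4 import BeautifulSoup
from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.support import expected_conditions as EC
from selenium.webdriver.support.ui import WebDriverWait

driver = webdriver.Chrome()  # Use the appropriate driver for your browser
try:
    driver.get("https://example.com")
    # Explicitly wait up to 10 s for the dynamic content to appear;
    # ".price" is a placeholder selector for the element you need
    WebDriverWait(driver, 10).until(
        EC.presence_of_element_located((By.CSS_SELECTOR, ".price"))
    )
    soup = BeautifulSoup(driver.page_source, "html.parser")
    # Extract data using BeautifulSoup as shown in previous examples
finally:
    driver.quit()
```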
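The price-extraction example prints each price as raw text, such as $1,299.99. For price monitoring you'll usually want numbers you can compare over time. The sketch below is one way to convert them; parse_price is a hypothetical helper, and it assumes a comma thousands separator and a dot decimal point (formats like 1.299,99 would need different handling). Decimal avoids floating-point rounding surprises with currency.

```python
import re
from decimal import Decimal
from typing import Optional

# Digits with optional comma thousands separators and an optional decimal part
_PRICE_RE = re.compile(r"\d[\d,]*(?:\.\d+)?")


def parse_price(text: str) -> Optional[Decimal]:
    """Convert a scraped price string like '$1,299.99' into a Decimal.

    Assumes ',' is the thousands separator and '.' the decimal point.
    Returns None if the text contains no number (e.g. 'Sold out').
    """
    match = _PRICE_RE.search(text)
    if match is None:
        return None
    return Decimal(match.group().replace(",", ""))


print(parse_price("$1,299.99"))     # 1299.99
print(parse_price(" Now: 15 USD"))  # 15
print(parse_price("Sold out"))      # None
```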

These examples demonstrate the basic techniques for extracting data from web pages using Python and BeautifulSoup. Remember to adapt the code to fit the specific structure and requirements of the websites you're scraping.
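Adapting to real pages often means coping with missing elements: in the event example, event.find(...) returns None when a field is absent, and reading its text then raises an AttributeError. One defensive pattern, shown here on a small inline HTML sample (the markup and the text_or_none helper are hypothetical), is to record None for missing fields instead of crashing:

```python
from bs4 import BeautifulSoup

# Sample markup: the second event has no location
html = """
<div class="event"><h3>Launch party</h3><p class="date">2024-05-01</p>
  <span class="location">Berlin</span></div>
<div class="event"><h3>Webinar</h3><p class="date">2024-06-12</p></div>
"""


def text_or_none(parent, *args, **kwargs):
    """Return the stripped text of the first matching child, or None if absent."""
    element = parent.find(*args, **kwargs)
    return element.get_text(strip=True) if element is not None else None


soup = BeautifulSoup(html, "html.parser")
events = [
    {
        "title": text_or_none(event, "h3"),
        "date": text_or_none(event, "p", class_="date"),
        "location": text_or_none(event, "span", class_="location"),
    }
    for event in soup.find_all("div", class_="event")
]
print(events)
```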

Save time on web scraping with our Bardeen AI. Use our Google search result playbook for one-click automation. No coding needed.

Legal and Ethical Considerations in Screen Scraping

When engaging in screen scraping, it's crucial to be aware of the legal and ethical implications to avoid potential legal issues and maintain a good reputation.

Legal Implications and Copyright Issues

Screen scraping can infringe copyright if the content you collect or republish is protected. It's important to consider the following:

- Scraping copyrighted content without permission may violate the copyright holder's intellectual property rights.

- Some websites may have terms of service that explicitly prohibit scraping, and violating these terms could lead to legal consequences.

- Court cases such as hiQ Labs v. LinkedIn have set precedents regarding the legality of scraping publicly available data, but the legal landscape is still evolving.

Respecting Robots.txt and Website Terms of Use

To scrape ethically and avoid legal issues, it's essential to respect the website's rules and guidelines:

- Check the website's robots.txt file, which tells automated clients which paths they may or may not crawl. Follow these rules to respect the website owner's wishes.

- Review the website's terms of service or terms of use to understand their stance on scraping. If the terms explicitly prohibit scraping website data, it's best to seek permission or find alternative data sources.
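Python's standard library can check robots.txt rules for you through urllib.robotparser. The sketch below parses a hypothetical robots.txt from a string so it runs offline; against a real site you would call set_url() with the site's robots.txt URL and then read(). The agent name ExampleScraper is also a placeholder.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration
robots_txt = """
User-agent: *
Disallow: /checkout/
Crawl-delay: 5
"""

rp = RobotFileParser()
rp.parse(robots_txt.splitlines())  # for a live site: rp.set_url(...); rp.read()

agent = "ExampleScraper"
print(rp.can_fetch(agent, "https://example.com/products"))   # True: not disallowed
print(rp.can_fetch(agent, "https://example.com/checkout/"))  # False: disallowed path
print(rp.crawl_delay(agent))  # 5: seconds the site asks crawlers to wait
```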

Best Practices for Ethical Scraping

Adopt the following best practices to ensure your scraping activities are ethical and respectful:

- Practice rate limiting: Avoid sending too many requests in a short period to prevent overloading the website's servers and disrupting its performance.

- Use a legitimate user agent: Identify your scraper with a custom user agent string that includes your contact information. This transparency helps website owners understand your intentions and reach out if necessary.

- Obtain permission when required: If you plan to scrape sensitive or proprietary data, it's advisable to contact the website owner and seek explicit permission to avoid legal repercussions.
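Rate limiting and a descriptive user agent can both be built into a small wrapper around requests. This is a minimal sketch under stated assumptions: the user-agent string, the Throttle class, the polite_get helper, and the 2-second interval are all hypothetical choices, not values any site requires. The clock and sleep functions are injectable so the throttling logic can be tested without waiting.

```python
import time

import requests

# Hypothetical identity: replace the name, URL, and email with your own
USER_AGENT = "ExampleScraper/1.0 (+https://example.com/bot; contact@example.com)"


class Throttle:
    """Ensure at least `min_interval` seconds pass between calls to wait()."""

    def __init__(self, min_interval: float, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous request, if any

    def wait(self) -> None:
        if self._last is not None:
            elapsed = self._clock() - self._last
            if elapsed < self.min_interval:
                self._sleep(self.min_interval - elapsed)
        self._last = self._clock()


session = requests.Session()
session.headers.update({"User-Agent": USER_AGENT})  # sent with every request
throttle = Throttle(min_interval=2.0)


def polite_get(url: str) -> requests.Response:
    """Fetch a URL with the custom user agent, at most one request every 2 s."""
    throttle.wait()
    return session.get(url, timeout=10)
```

A shared session also reuses connections between requests, which is lighter on the target server than opening a new connection each time.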

By staying informed about legal considerations, respecting website guidelines, and following best practices, you can navigate the legal landscape of screen scraping and ensure your scraping activities are conducted ethically and responsibly.

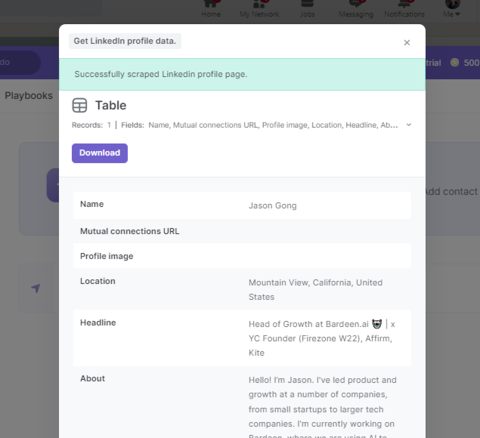

Automate Scraper Tasks with Bardeen Playbooks

Screen scraping a web page can be performed manually or fully automated using Bardeen's integration capabilities. Automating the screen scraping process is invaluable for tasks such as competitive analysis, market research, or data collection for machine learning projects. Here are examples of automations you can build with Bardeen:

- Download full-page PDF screenshots of websites from links in a Google Sheet: This playbook automates the process of capturing full-page PDF screenshots from a list of website links in a Google Sheet, perfect for archiving web pages or conducting visual comparisons of web content over time.

- Get web page content of websites: Extract the content from a list of website links in your Google Sheets spreadsheet and update each row with the content of the website. Ideal for SEO analysis, content aggregation, or competitive research.

- Download a full-page PDF screenshot of a webpage from a link: Simplify the process of capturing a webpage in PDF format for a single website link, streamlining tasks such as documenting online resources or preparing presentations with web content.

Embrace the efficiency of automating your screen scraping tasks with Bardeen. Download the Bardeen app at Bardeen.ai/download to explore these and other powerful automations.
